Note: This page refers to the Camera2 package. Unless your app requires specific, low-level features from Camera2, we recommend using CameraX. Both CameraX and Camera2 support Android 5.0 (API level 21) and higher.

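Multi-camera support was introduced with Android 9 (API level 28). Since its release, devices that support the API have come to market. Many multi-camera use cases are tightly coupled to a specific hardware configuration. In other words, not every use case is compatible with every device, which makes multi-camera features a good candidate for Play Feature Delivery.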

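Some typical use cases include:

- Zoom: switching between cameras depending on the crop region or the desired focal length.

- Depth: using multiple cameras to build a depth map.

- Bokeh: using inferred depth information to simulate a DSLR-like shallow depth of field.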

The difference between logical and physical cameras

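Understanding the multi-camera API requires understanding the difference between logical and physical cameras. As an example, consider a device with three back-facing cameras. Each of the three back cameras is considered a physical camera. A logical camera is then a grouping of two or more of those physical cameras. The output of the logical camera can be a stream that comes from one of the underlying physical cameras, or a fused stream that comes from more than one underlying physical camera at the same time. Either way, the stream is handled by the camera Hardware Abstraction Layer (HAL).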

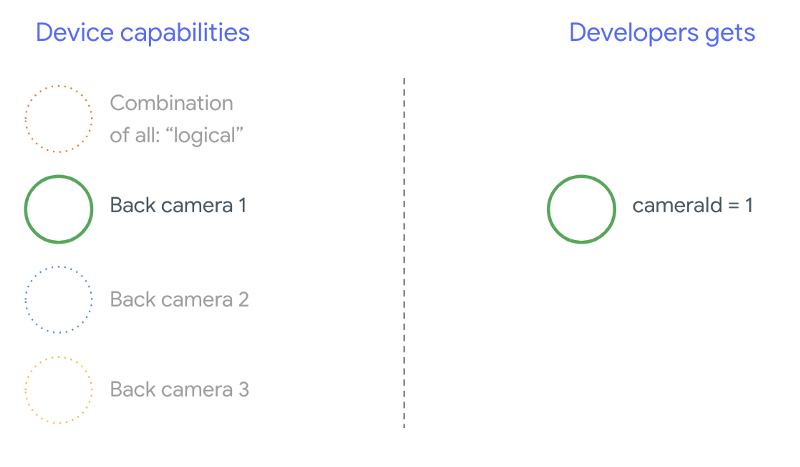

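Many phone manufacturers develop first-party camera applications, which usually come preinstalled on their devices. To use all of the hardware's capabilities, they may use private or hidden APIs, or receive special treatment from the driver implementation that other applications cannot access. Some devices implement the concept of logical cameras by providing a fused stream of frames from the different physical cameras, but only to certain privileged applications. Often, only one of the physical cameras is exposed to the framework. The situation for third-party developers prior to Android 9 is illustrated in the following diagram: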

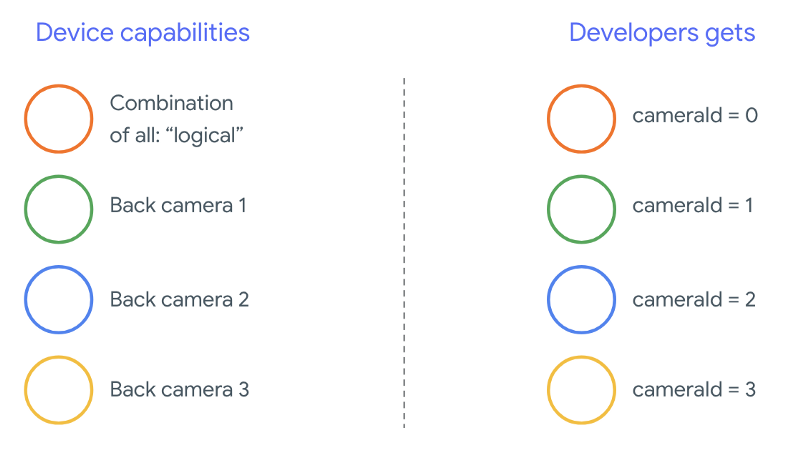

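Beginning in Android 9, private APIs are no longer allowed in Android apps. With the inclusion of multi-camera support in the framework, Android best practices strongly recommend that phone manufacturers expose a logical camera for all physical cameras facing the same direction. Third-party developers should expect to see such a logical camera on devices running Android 9 and higher.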

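What the logical camera provides depends entirely on the OEM implementation of the camera HAL. For example, a device like Pixel 3 implements its logical camera so that it chooses one of its physical cameras based on the requested focal length and crop region.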

The multi-camera API

The new API adds the following constants, classes, and methods:

- CameraMetadata.REQUEST_AVAILABLE_CAPABILITIES_LOGICAL_MULTI_CAMERA

- CameraCharacteristics.getPhysicalCameraIds()

- CameraCharacteristics.getAvailablePhysicalCameraRequestKeys()

- CameraDevice.createCaptureSession(SessionConfiguration config)

- CameraCharacteristics.LOGICAL_MULTI_CAMERA_SENSOR_SYNC_TYPE

- OutputConfiguration and SessionConfiguration

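Because of changes to the Android Compatibility Definition Document (CDD), the multi-camera API also comes with certain expectations for developers. Devices with dual cameras existed before Android 9, but opening more than one camera at the same time involved trial and error. On Android 9 and higher, multi-camera provides a set of rules that specify when it is possible to open a pair of physical cameras that belong to the same logical camera.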

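In most cases, devices running Android 9 and higher expose all physical cameras (except possibly less common sensor types such as infrared) along with an easier-to-use logical camera. For every combination of streams that is guaranteed to work, one stream belonging to a logical camera can be replaced by two streams from the underlying physical cameras.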

Using multiple streams simultaneously

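The "Using multiple camera streams simultaneously" documentation covers the rules for using multiple streams at the same time in a single camera. With one notable addition, the same rules apply to multiple cameras. CameraMetadata.REQUEST_AVAILABLE_CAPABILITIES_LOGICAL_MULTI_CAMERA explains how to replace a logical YUV_420_888 or raw stream with two physical streams. That is, each stream of type YUV or RAW can be replaced with two streams of identical type and size. You can start with a camera stream of the following guaranteed configuration for single-camera devices: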

- Stream 1: YUV type, MAXIMUM size from logical camera id = 0

Then, a device with multi-camera support lets you create a session that replaces that logical YUV stream with two physical streams:

- Stream 1: YUV type, MAXIMUM size from physical camera id = 1

- Stream 2: YUV type, MAXIMUM size from physical camera id = 2

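You can replace a YUV or RAW stream with two equivalent streams if and only if those two cameras are part of a logical camera grouping, which is listed under CameraCharacteristics.getPhysicalCameraIds().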
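
The replacement rule can be modeled off-device as a plain predicate. The following is a minimal sketch, not framework code: StreamSpec and canReplace are hypothetical names, the format strings and sizes are made up for illustration, and the requirement that the two physical IDs differ is this sketch's reading of "a pair of physical cameras". On a real device, the ID set would come from CameraCharacteristics.getPhysicalCameraIds().

```java
import java.util.Arrays;
import java.util.HashSet;
import java.util.Set;

public class PhysicalStreamSwap {
    /** Hypothetical stand-in for one configured stream; not a Camera2 type. */
    static final class StreamSpec {
        final String cameraId;
        final String format;
        final int width;
        final int height;

        StreamSpec(String cameraId, String format, int width, int height) {
            this.cameraId = cameraId;
            this.format = format;
            this.width = width;
            this.height = height;
        }

        boolean sameTypeAndSize(StreamSpec other) {
            return format.equals(other.format) && width == other.width && height == other.height;
        }
    }

    /**
     * Checks whether one logical stream may be swapped for two physical streams:
     * the stream is YUV or RAW, both replacements match its type and size, and
     * both physical cameras belong to the logical camera's grouping.
     */
    static boolean canReplace(StreamSpec logical, StreamSpec first, StreamSpec second,
            Set<String> physicalIds) {
        boolean swappableFormat = logical.format.equals("YUV") || logical.format.equals("RAW");
        boolean distinctCameras = !first.cameraId.equals(second.cameraId);
        boolean sameGrouping = physicalIds.contains(first.cameraId)
                && physicalIds.contains(second.cameraId);
        return swappableFormat && distinctCameras && sameGrouping
                && logical.sameTypeAndSize(first) && logical.sameTypeAndSize(second);
    }

    public static void main(String[] args) {
        // Hypothetical grouping: logical camera "0" backed by physical cameras "1" and "2"
        Set<String> grouping = new HashSet<>(Arrays.asList("1", "2"));
        StreamSpec logical = new StreamSpec("0", "YUV", 4032, 3024);
        StreamSpec wide = new StreamSpec("1", "YUV", 4032, 3024);
        StreamSpec tele = new StreamSpec("2", "YUV", 4032, 3024);
        StreamSpec other = new StreamSpec("3", "YUV", 4032, 3024);

        System.out.println(canReplace(logical, wide, tele, grouping));  // prints "true"
        System.out.println(canReplace(logical, wide, other, grouping)); // prints "false"
    }
}
```

The second call fails because camera "3" is not in the grouping, which mirrors the "if and only if" condition above.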

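The guarantees provided by the framework are only the bare minimum required to get frames from more than one physical camera at the same time. Most devices support additional streams, and some even allow multiple physical camera devices to be opened independently. Because this is not a hard guarantee from the framework, relying on it requires per-device testing and tuning through trial and error.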

Creating a session with multiple physical cameras

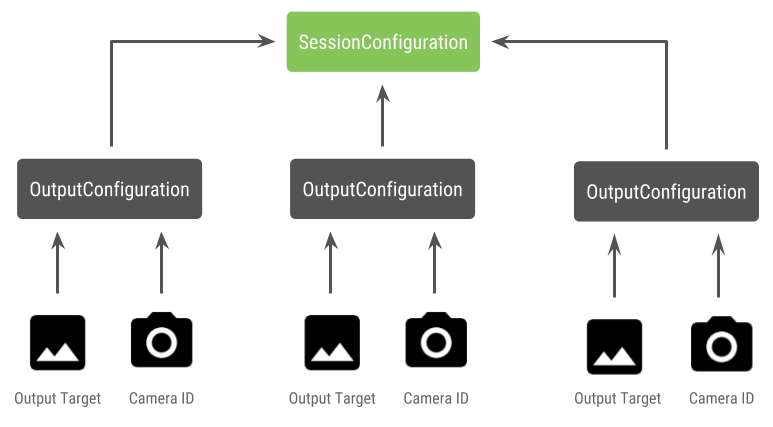

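When using physical cameras on a multi-camera-enabled device, open a single CameraDevice (the logical camera) and interact with it within a single session. Create the single session using CameraDevice.createCaptureSession(SessionConfiguration config), which was added in API level 28. The session configuration contains a number of output configurations, each of which has a set of output targets and, optionally, a desired physical camera ID.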

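Capture requests have an output target associated with them. The framework determines which physical (or logical) camera a request is sent to based on the output target that is attached. If the output target corresponds to one of the output targets that was sent as an output configuration along with a physical camera ID, then that physical camera receives and processes the request.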

Using a pair of physical cameras

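Another addition to the multi-camera API is the ability to identify logical cameras and find the physical cameras behind them. You can define a function to help identify potential pairs of physical cameras that you can use to replace one of the logical camera streams: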

Kotlin

    import android.hardware.camera2.CameraCharacteristics
    import android.hardware.camera2.CameraManager

    /**
     * Helper class used to encapsulate a logical camera and two underlying
     * physical cameras
     */
    data class DualCamera(val logicalId: String, val physicalId1: String, val physicalId2: String)

    fun findDualCameras(manager: CameraManager, facing: Int? = null): List<DualCamera> {
        val dualCameras = mutableListOf<DualCamera>()

        // Iterate over all the available camera characteristics
        manager.cameraIdList.map {
            Pair(manager.getCameraCharacteristics(it), it)
        }.filter {
            // Filter by cameras facing the requested direction
            facing == null || it.first.get(CameraCharacteristics.LENS_FACING) == facing
        }.filter {
            // Filter by logical cameras
            // CameraCharacteristics.REQUEST_AVAILABLE_CAPABILITIES_LOGICAL_MULTI_CAMERA requires API >= 28
            it.first.get(CameraCharacteristics.REQUEST_AVAILABLE_CAPABILITIES)!!.contains(
                CameraCharacteristics.REQUEST_AVAILABLE_CAPABILITIES_LOGICAL_MULTI_CAMERA)
        }.forEach {
            // All possible pairs from the list of physical cameras are valid results
            // NOTE: There could be N physical cameras as part of a logical camera grouping
            // getPhysicalCameraIds() requires API >= 28
            val physicalCameras = it.first.physicalCameraIds.toTypedArray()
            for (idx1 in 0 until physicalCameras.size) {
                for (idx2 in (idx1 + 1) until physicalCameras.size) {
                    dualCameras.add(DualCamera(
                        it.second, physicalCameras[idx1], physicalCameras[idx2]))
                }
            }
        }

        return dualCameras
    }

Java

    import android.hardware.camera2.CameraAccessException;
    import android.hardware.camera2.CameraCharacteristics;
    import android.hardware.camera2.CameraManager;
    import android.util.Pair;
    import java.util.ArrayList;
    import java.util.Arrays;
    import java.util.List;
    import java.util.function.IntPredicate;

    /**
     * Helper class used to encapsulate a logical camera and two underlying
     * physical cameras
     */
    final class DualCamera {
        final String logicalId;
        final String physicalId1;
        final String physicalId2;

        DualCamera(String logicalId, String physicalId1, String physicalId2) {
            this.logicalId = logicalId;
            this.physicalId1 = physicalId1;
            this.physicalId2 = physicalId2;
        }
    }

    List<DualCamera> findDualCameras(CameraManager manager, Integer facing) {
        List<DualCamera> dualCameras = new ArrayList<>();

        List<String> cameraIdList;
        try {
            cameraIdList = Arrays.asList(manager.getCameraIdList());
        } catch (CameraAccessException e) {
            e.printStackTrace();
            cameraIdList = new ArrayList<>();
        }

        // Iterate over all the available camera characteristics
        cameraIdList.stream()
                .map(id -> {
                    try {
                        CameraCharacteristics characteristics = manager.getCameraCharacteristics(id);
                        return new Pair<>(characteristics, id);
                    } catch (CameraAccessException e) {
                        e.printStackTrace();
                        return null;
                    }
                })
                .filter(pair -> {
                    // Filter by cameras facing the requested direction
                    return (pair != null) &&
                            (facing == null || pair.first.get(CameraCharacteristics.LENS_FACING).equals(facing));
                })
                .filter(pair -> {
                    // Filter by logical cameras
                    // CameraCharacteristics.REQUEST_AVAILABLE_CAPABILITIES_LOGICAL_MULTI_CAMERA requires API >= 28
                    IntPredicate logicalMultiCameraPred =
                            arg -> arg == CameraCharacteristics.REQUEST_AVAILABLE_CAPABILITIES_LOGICAL_MULTI_CAMERA;
                    return Arrays.stream(pair.first.get(CameraCharacteristics.REQUEST_AVAILABLE_CAPABILITIES))
                            .anyMatch(logicalMultiCameraPred);
                })
                .forEach(pair -> {
                    // All possible pairs from the list of physical cameras are valid results
                    // NOTE: There could be N physical cameras as part of a logical camera grouping
                    // getPhysicalCameraIds() requires API >= 28
                    String[] physicalCameras = pair.first.getPhysicalCameraIds().toArray(new String[0]);
                    for (int idx1 = 0; idx1 < physicalCameras.length; idx1++) {
                        for (int idx2 = idx1 + 1; idx2 < physicalCameras.length; idx2++) {
                            dualCameras.add(
                                    new DualCamera(pair.second, physicalCameras[idx1], physicalCameras[idx2]));
                        }
                    }
                });

        return dualCameras;
    }
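
Because a logical camera can group N physical cameras, the nested loop yields N(N-1)/2 candidate pairs. The loop can be run without a device as the sketch below shows. The class name PhysicalPairs and the camera IDs are hypothetical and only serve as illustration.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

public class PhysicalPairs {
    /** Returns every unordered pair {a, b} drawn from the given camera IDs. */
    static List<String[]> allPairs(List<String> physicalIds) {
        List<String[]> pairs = new ArrayList<>();
        for (int i = 0; i < physicalIds.size(); i++) {
            for (int j = i + 1; j < physicalIds.size(); j++) {
                pairs.add(new String[] {physicalIds.get(i), physicalIds.get(j)});
            }
        }
        return pairs;
    }

    public static void main(String[] args) {
        // Hypothetical IDs for a logical camera backed by three physical cameras
        for (String[] pair : allPairs(Arrays.asList("2", "3", "4"))) {
            System.out.println(pair[0] + " + " + pair[1]);
        }
    }
}
```

With three physical cameras this prints three pairs, so a caller usually needs a further criterion, such as focal length, to pick one of them.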

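State handling of the physical cameras is controlled by the logical camera. To open a "dual camera," open the logical camera that corresponds to the physical cameras: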

Kotlin

    import android.hardware.camera2.CameraDevice
    import android.hardware.camera2.CameraManager
    import android.os.AsyncTask
    import java.util.concurrent.Executor

    fun openDualCamera(cameraManager: CameraManager,
                       dualCamera: DualCamera,
                       // AsyncTask is deprecated beginning API 30
                       executor: Executor = AsyncTask.SERIAL_EXECUTOR,
                       callback: (CameraDevice) -> Unit) {

        // openCamera() with an executor requires API >= 28 and the CAMERA permission
        cameraManager.openCamera(
            dualCamera.logicalId, executor, object : CameraDevice.StateCallback() {
                override fun onOpened(device: CameraDevice) = callback(device)
                // Omitting for brevity...
                override fun onError(device: CameraDevice, error: Int) = onDisconnected(device)
                override fun onDisconnected(device: CameraDevice) = device.close()
            })
    }

Java

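    import android.hardware.camera2.CameraAccessException;
    import android.hardware.camera2.CameraDevice;
    import android.hardware.camera2.CameraManager;
    import androidx.annotation.NonNull;
    import java.util.concurrent.Executor;

    /** Callback invoked once the logical camera has been opened. */
    interface CameraDeviceCallback {
        void callback(CameraDevice cameraDevice);
    }

    void openDualCamera(CameraManager cameraManager,
                        DualCamera dualCamera,
                        Executor executor,
                        CameraDeviceCallback cameraDeviceCallback) {
        try {
            // openCamera() with an executor requires API >= 28 and the CAMERA permission
            cameraManager.openCamera(dualCamera.logicalId, executor, new CameraDevice.StateCallback() {
                @Override
                public void onOpened(@NonNull CameraDevice cameraDevice) {
                    cameraDeviceCallback.callback(cameraDevice);
                }

                @Override
                public void onDisconnected(@NonNull CameraDevice cameraDevice) {
                    cameraDevice.close();
                }

                @Override
                public void onError(@NonNull CameraDevice cameraDevice, int i) {
                    onDisconnected(cameraDevice);
                }
            });
        } catch (CameraAccessException e) {
            e.printStackTrace();
        }
    }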

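Besides selecting which camera to open, the process is the same as opening a camera in past Android versions. Creating a capture session using the new session configuration API tells the framework to associate certain targets with specific physical camera IDs: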

Kotlin

    import android.hardware.camera2.CameraCaptureSession
    import android.hardware.camera2.CameraManager
    import android.hardware.camera2.params.OutputConfiguration
    import android.hardware.camera2.params.SessionConfiguration
    import android.os.AsyncTask
    import android.view.Surface
    import java.util.concurrent.Executor

    /**
     * Helper type definition that encapsulates 3 sets of output targets:
     *
     *   1. Logical camera
     *   2. First physical camera
     *   3. Second physical camera
     */
    typealias DualCameraOutputs =
        Triple<MutableList<Surface>?, MutableList<Surface>?, MutableList<Surface>?>

    fun createDualCameraSession(cameraManager: CameraManager,
                                dualCamera: DualCamera,
                                targets: DualCameraOutputs,
                                // AsyncTask is deprecated beginning API 30
                                executor: Executor = AsyncTask.SERIAL_EXECUTOR,
                                callback: (CameraCaptureSession) -> Unit) {

        // Create 3 sets of output configurations: one for the logical camera, and
        // one for each of the physical cameras.
        val outputConfigsLogical = targets.first?.map { OutputConfiguration(it) }
        val outputConfigsPhysical1 = targets.second?.map {
            OutputConfiguration(it).apply { setPhysicalCameraId(dualCamera.physicalId1) } }
        val outputConfigsPhysical2 = targets.third?.map {
            OutputConfiguration(it).apply { setPhysicalCameraId(dualCamera.physicalId2) } }

        // Put all the output configurations into a single flat list
        val outputConfigsAll = arrayOf(
            outputConfigsLogical, outputConfigsPhysical1, outputConfigsPhysical2)
            .filterNotNull().flatMap { it }

        // Instantiate a session configuration that can be used to create a session
        val sessionConfiguration = SessionConfiguration(
            SessionConfiguration.SESSION_REGULAR,
            outputConfigsAll, executor, object : CameraCaptureSession.StateCallback() {
                override fun onConfigured(session: CameraCaptureSession) = callback(session)
                // Omitting for brevity...
                override fun onConfigureFailed(session: CameraCaptureSession) = session.device.close()
            })

        // Open the logical camera using the previously defined function
        openDualCamera(cameraManager, dualCamera, executor = executor) {

            // Finally create the session and return via callback
            it.createCaptureSession(sessionConfiguration)
        }
    }

Java

    import android.hardware.camera2.CameraAccessException;
    import android.hardware.camera2.CameraCaptureSession;
    import android.hardware.camera2.CameraDevice;
    import android.hardware.camera2.CameraManager;
    import android.hardware.camera2.params.OutputConfiguration;
    import android.hardware.camera2.params.SessionConfiguration;
    import android.view.Surface;
    import androidx.annotation.NonNull;
    import java.util.Collection;
    import java.util.Collections;
    import java.util.List;
    import java.util.concurrent.Executor;
    import java.util.stream.Collectors;
    import java.util.stream.Stream;

    /**
     * Helper class definition that encapsulates 3 sets of output targets:
     *
     *   1. Logical camera
     *   2. First physical camera
     *   3. Second physical camera
     */
    final class DualCameraOutputs {
        private final List<Surface> logicalCamera;
        private final List<Surface> firstPhysicalCamera;
        private final List<Surface> secondPhysicalCamera;

        public DualCameraOutputs(List<Surface> logicalCamera,
                                 List<Surface> firstPhysicalCamera,
                                 List<Surface> secondPhysicalCamera) {
            this.logicalCamera = logicalCamera;
            this.firstPhysicalCamera = firstPhysicalCamera;
            this.secondPhysicalCamera = secondPhysicalCamera;
        }

        public List<Surface> getLogicalCamera() { return logicalCamera; }
        public List<Surface> getFirstPhysicalCamera() { return firstPhysicalCamera; }
        public List<Surface> getSecondPhysicalCamera() { return secondPhysicalCamera; }
    }

    interface CameraCaptureSessionCallback {
        void callback(CameraCaptureSession cameraCaptureSession);
    }

    // Wraps each surface in an output configuration. A null surface list yields an
    // empty result; a null physicalId leaves the output on the logical camera.
    List<OutputConfiguration> toOutputConfigs(List<Surface> surfaces, String physicalId) {
        if (surfaces == null) {
            return Collections.emptyList();
        }
        return surfaces.stream()
                .map(s -> {
                    OutputConfiguration outputConfiguration = new OutputConfiguration(s);
                    if (physicalId != null) {
                        outputConfiguration.setPhysicalCameraId(physicalId);
                    }
                    return outputConfiguration;
                })
                .collect(Collectors.toList());
    }

    void createDualCameraSession(CameraManager cameraManager,
                                 DualCamera dualCamera,
                                 DualCameraOutputs targets,
                                 Executor executor,
                                 CameraCaptureSessionCallback cameraCaptureSessionCallback) {

        // Create 3 sets of output configurations: one for the logical camera, and
        // one for each of the physical cameras. Then put them into a single flat list.
        List<OutputConfiguration> outputConfigsAll = Stream.of(
                        toOutputConfigs(targets.getLogicalCamera(), null),
                        toOutputConfigs(targets.getFirstPhysicalCamera(), dualCamera.physicalId1),
                        toOutputConfigs(targets.getSecondPhysicalCamera(), dualCamera.physicalId2))
                .flatMap(Collection::stream)
                .collect(Collectors.toList());

        // Instantiate a session configuration that can be used to create a session
        SessionConfiguration sessionConfiguration = new SessionConfiguration(
                SessionConfiguration.SESSION_REGULAR,
                outputConfigsAll,
                executor,
                new CameraCaptureSession.StateCallback() {
                    @Override
                    public void onConfigured(@NonNull CameraCaptureSession cameraCaptureSession) {
                        cameraCaptureSessionCallback.callback(cameraCaptureSession);
                    }
                    // Omitting for brevity...
                    @Override
                    public void onConfigureFailed(@NonNull CameraCaptureSession cameraCaptureSession) {
                        cameraCaptureSession.getDevice().close();
                    }
                });

        // Open the logical camera using the previously defined function
        openDualCamera(cameraManager, dualCamera, executor, (CameraDevice c) -> {
            try {
                // Finally create the session and return via callback
                c.createCaptureSession(sessionConfiguration);
            } catch (CameraAccessException e) {
                e.printStackTrace();
            }
        });
    }

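For information on which combinations of streams are supported, see createCaptureSession. The listed combinations apply to multiple streams on a single logical camera. Compatibility extends to using the same configuration with one of those streams replaced by two streams from two physical cameras that are part of the same logical camera.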

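With the camera session ready, dispatch the desired capture requests. Each target of the capture request receives its data from its associated physical camera, if one is in use, or falls back to the logical camera.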
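
The fallback behavior can be pictured as a lookup from output target to camera ID. The sketch below is only a mental model in plain Java, not how the framework is implemented: TargetRouting, bind, and sourceFor are hypothetical names, and targets are represented by strings rather than Surface objects.

```java
import java.util.HashMap;
import java.util.Map;

public class TargetRouting {
    /** Physical camera ID chosen for each target that was bound to one. */
    private final Map<String, String> physicalIdByTarget = new HashMap<>();
    private final String logicalId;

    TargetRouting(String logicalId) {
        this.logicalId = logicalId;
    }

    /** Records that a target's output configuration names a physical camera. */
    void bind(String target, String physicalId) {
        physicalIdByTarget.put(target, physicalId);
    }

    /** Returns the camera that feeds the target, falling back to the logical camera. */
    String sourceFor(String target) {
        return physicalIdByTarget.getOrDefault(target, logicalId);
    }

    public static void main(String[] args) {
        // Hypothetical IDs: logical camera "0" with physical cameras "1" and "2"
        TargetRouting routing = new TargetRouting("0");
        routing.bind("wideSurface", "1");
        routing.bind("teleSurface", "2");

        System.out.println(routing.sourceFor("wideSurface"));  // prints "1"
        System.out.println(routing.sourceFor("otherSurface")); // prints "0"
    }
}
```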

Zoom example use case

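Physical cameras can be presented as a single stream so that users can switch between the different physical cameras to experience a different field of view, effectively capturing a different "zoom level."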

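Start by selecting the pair of physical cameras that users can switch between. For maximum effect, you can choose the pair of cameras that provides the minimum and maximum available focal lengths.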

Kotlin

    import android.hardware.camera2.CameraCharacteristics
    import android.hardware.camera2.CameraManager

    fun findShortLongCameraPair(manager: CameraManager, facing: Int? = null): DualCamera? {

        return findDualCameras(manager, facing).map {
            val characteristics1 = manager.getCameraCharacteristics(it.physicalId1)
            val characteristics2 = manager.getCameraCharacteristics(it.physicalId2)

            // Query the focal lengths advertised by each physical camera
            val focalLengths1 = characteristics1.get(
                CameraCharacteristics.LENS_INFO_AVAILABLE_FOCAL_LENGTHS) ?: floatArrayOf(0F)
            val focalLengths2 = characteristics2.get(
                CameraCharacteristics.LENS_INFO_AVAILABLE_FOCAL_LENGTHS) ?: floatArrayOf(0F)

            // Compute the focal length spread for both orderings of the pair:
            // camera 1 as the short end, then camera 2 as the short end
            val focalLengthsDiff1 = focalLengths2.maxOrNull()!! - focalLengths1.minOrNull()!!
            val focalLengthsDiff2 = focalLengths1.maxOrNull()!! - focalLengths2.minOrNull()!!

            // Keep the ordering with the larger spread, short camera first
            if (focalLengthsDiff1 > focalLengthsDiff2) {
                Pair(DualCamera(it.logicalId, it.physicalId1, it.physicalId2), focalLengthsDiff1)
            } else {
                Pair(DualCamera(it.logicalId, it.physicalId2, it.physicalId1), focalLengthsDiff2)
            }

            // Return only the pair with the largest difference, or null if no pairs are found
        }.maxByOrNull { it.second }?.first
    }

Java

    import android.hardware.camera2.CameraAccessException;
    import android.hardware.camera2.CameraCharacteristics;
    import android.hardware.camera2.CameraManager;
    import android.util.Pair;
    import java.util.Comparator;
    import java.util.Objects;

    // Utility functions to find min/max value in float[]
    float findMax(float[] array) {
        float max = Float.NEGATIVE_INFINITY;
        for (float cur : array)
            max = Math.max(max, cur);
        return max;
    }

    float findMin(float[] array) {
        float min = Float.POSITIVE_INFINITY;
        for (float cur : array)
            min = Math.min(min, cur);
        return min;
    }

    DualCamera findShortLongCameraPair(CameraManager manager, Integer facing) {
        return findDualCameras(manager, facing).stream()
                .map(c -> {
                    CameraCharacteristics characteristics1;
                    CameraCharacteristics characteristics2;
                    try {
                        characteristics1 = manager.getCameraCharacteristics(c.physicalId1);
                        characteristics2 = manager.getCameraCharacteristics(c.physicalId2);
                    } catch (CameraAccessException e) {
                        e.printStackTrace();
                        return null;
                    }

                    // Query the focal lengths advertised by each physical camera
                    float[] focalLengths1 = characteristics1.get(
                            CameraCharacteristics.LENS_INFO_AVAILABLE_FOCAL_LENGTHS);
                    float[] focalLengths2 = characteristics2.get(
                            CameraCharacteristics.LENS_INFO_AVAILABLE_FOCAL_LENGTHS);

                    // Compute the focal length spread for both orderings of the pair:
                    // camera 1 as the short end, then camera 2 as the short end
                    Float focalLengthsDiff1 = findMax(focalLengths2) - findMin(focalLengths1);
                    Float focalLengthsDiff2 = findMax(focalLengths1) - findMin(focalLengths2);

                    // Keep the ordering with the larger spread, short camera first
                    if (focalLengthsDiff1 > focalLengthsDiff2) {
                        return new Pair<>(new DualCamera(c.logicalId, c.physicalId1, c.physicalId2), focalLengthsDiff1);
                    } else {
                        return new Pair<>(new DualCamera(c.logicalId, c.physicalId2, c.physicalId1), focalLengthsDiff2);
                    }
                })
                // Skip pairs whose characteristics could not be read
                .filter(Objects::nonNull)
                // Return only the pair with the largest difference, or null if no pairs are found
                .max(Comparator.comparing(pair -> pair.second))
                .map(pair -> pair.first)
                .orElse(null);
    }
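
The selection criterion can be checked without camera hardware. The following sketch applies the same "largest focal length spread, short camera first" idea to plain arrays. The class name FocalSpread, the method widestPair, and the focal lengths are assumptions made for illustration and do not describe any particular device.

```java
public class FocalSpread {
    static float min(float[] values) {
        float min = Float.POSITIVE_INFINITY;
        for (float v : values) min = Math.min(min, v);
        return min;
    }

    static float max(float[] values) {
        float max = Float.NEGATIVE_INFINITY;
        for (float v : values) max = Math.max(max, v);
        return max;
    }

    /**
     * Returns {shortIndex, longIndex} for the pair of cameras with the largest
     * focal length spread, or null when fewer than two cameras are given.
     */
    static int[] widestPair(float[][] focalLengths) {
        int[] best = null;
        float bestSpread = Float.NEGATIVE_INFINITY;
        for (int i = 0; i < focalLengths.length; i++) {
            for (int j = i + 1; j < focalLengths.length; j++) {
                // Spread when camera i is the short end, and when camera j is
                float iShort = max(focalLengths[j]) - min(focalLengths[i]);
                float jShort = max(focalLengths[i]) - min(focalLengths[j]);
                float spread = Math.max(iShort, jShort);
                if (spread > bestSpread) {
                    bestSpread = spread;
                    best = (iShort >= jShort) ? new int[] {i, j} : new int[] {j, i};
                }
            }
        }
        return best;
    }

    public static void main(String[] args) {
        // Hypothetical focal lengths in millimeters for three physical cameras
        float[][] focalLengths = { {4.4f}, {1.8f}, {6.9f} };
        int[] pair = widestPair(focalLengths);
        System.out.println("short=" + pair[0] + " long=" + pair[1]); // prints "short=1 long=2"
    }
}
```

Here the 1.8 mm and 6.9 mm cameras win because their spread (5.1 mm) is larger than that of any other pair.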

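A sensible architecture for this is to have two SurfaceViews, one for each stream. These SurfaceViews are swapped based on user interaction so that only one is visible at any given time.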

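The following code shows how to open the logical camera, configure the camera outputs, create a camera session, and start two preview streams: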

Kotlin

    val manager: CameraManager = ...

    // Get the two output targets from the activity / fragment
    val surface1 = ...  // from SurfaceView
    val surface2 = ...  // from SurfaceView

    val dualCamera = findShortLongCameraPair(manager)!!
    val outputTargets = DualCameraOutputs(
        null, mutableListOf(surface1), mutableListOf(surface2))

    // Here you open the logical camera, configure the outputs and create a session
    createDualCameraSession(manager, dualCamera, targets = outputTargets) { session ->

        // Create a single request which has one target for each physical camera
        // NOTE: Each target receives frames from only its associated physical camera
        val requestTemplate = CameraDevice.TEMPLATE_PREVIEW
        val captureRequest = session.device.createCaptureRequest(requestTemplate).apply {
            arrayOf(surface1, surface2).forEach { addTarget(it) }
        }.build()

        // Set the sticky request for the session and you are done
        session.setRepeatingRequest(captureRequest, null, null)
    }

Java

    CameraManager manager = ...;
    // A non-null executor is required, for example context.getMainExecutor()
    Executor executor = ...;

    // Get the two output targets from the activity / fragment
    Surface surface1 = ...;  // from SurfaceView
    Surface surface2 = ...;  // from SurfaceView

    DualCamera dualCamera = findShortLongCameraPair(manager, null);
    DualCameraOutputs outputTargets = new DualCameraOutputs(
            null, Collections.singletonList(surface1), Collections.singletonList(surface2));

    // Here you open the logical camera, configure the outputs and create a session
    createDualCameraSession(manager, dualCamera, outputTargets, executor, (session) -> {
        // Create a single request which has one target for each physical camera
        // NOTE: Each target receives frames from only its associated physical camera
        CaptureRequest.Builder captureRequestBuilder;
        try {
            captureRequestBuilder = session.getDevice().createCaptureRequest(CameraDevice.TEMPLATE_PREVIEW);
            Arrays.asList(surface1, surface2).forEach(captureRequestBuilder::addTarget);

            // Set the sticky request for the session and you are done
            session.setRepeatingRequest(captureRequestBuilder.build(), null, null);
        } catch (CameraAccessException e) {
            e.printStackTrace();
        }
    });

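All that is left is to provide a UI that lets the user switch between the two surfaces, such as a button or a double tap on the SurfaceView. You could even perform some form of scene analysis and switch between the two streams automatically.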

Lens distortion

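All lenses produce a certain amount of distortion. In Android, you can query the distortion created by a lens using CameraCharacteristics.LENS_DISTORTION, which replaces the now-deprecated CameraCharacteristics.LENS_RADIAL_DISTORTION. For logical cameras, the distortion is minimal and your app can use the frames more or less as they come from the camera. For physical cameras, lens configurations can differ considerably, especially for wide-angle lenses.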

Some devices may implement automatic distortion correction through CaptureRequest.DISTORTION_CORRECTION_MODE. Distortion correction is on by default for most devices.

Kotlin

    val cameraSession: CameraCaptureSession = ...

    // Use still capture template to build the capture request
    val captureRequest = cameraSession.device.createCaptureRequest(
        CameraDevice.TEMPLATE_STILL_CAPTURE
    )

    // Determine if this device supports distortion correction
    val characteristics: CameraCharacteristics = ...
    val supportsDistortionCorrection = characteristics.get(
        CameraCharacteristics.DISTORTION_CORRECTION_AVAILABLE_MODES
    )?.contains(
        CameraMetadata.DISTORTION_CORRECTION_MODE_HIGH_QUALITY
    ) ?: false

    if (supportsDistortionCorrection) {
        captureRequest.set(
            CaptureRequest.DISTORTION_CORRECTION_MODE,
            CameraMetadata.DISTORTION_CORRECTION_MODE_HIGH_QUALITY
        )
    }

    // Add output target, set other capture request parameters...

    // Dispatch the capture request
    cameraSession.capture(captureRequest.build(), ...)

Java

    CameraCaptureSession cameraSession = ...;

    // Use still capture template to build the capture request
    CaptureRequest.Builder captureRequestBuilder = null;
    try {
        captureRequestBuilder = cameraSession.getDevice().createCaptureRequest(
                CameraDevice.TEMPLATE_STILL_CAPTURE
        );
    } catch (CameraAccessException e) {
        e.printStackTrace();
    }

    // Determine if this device supports distortion correction
    CameraCharacteristics characteristics = ...;
    // The key can be absent, in which case get() returns null
    int[] availableModes = characteristics.get(
            CameraCharacteristics.DISTORTION_CORRECTION_AVAILABLE_MODES
    );
    boolean supportsDistortionCorrection = availableModes != null
            && Arrays.stream(availableModes)
                    .anyMatch(i -> i == CameraMetadata.DISTORTION_CORRECTION_MODE_HIGH_QUALITY);
    if (supportsDistortionCorrection) {
        captureRequestBuilder.set(
                CaptureRequest.DISTORTION_CORRECTION_MODE,
                CameraMetadata.DISTORTION_CORRECTION_MODE_HIGH_QUALITY
        );
    }

    // Add output target, set other capture request parameters...

    // Dispatch the capture request
    cameraSession.capture(captureRequestBuilder.build(), ...);

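Setting a capture request in this mode can affect the frame rate that the camera can produce. You may choose to enable distortion correction only for still image captures.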
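
One way to express that choice is a small policy function that decides which mode to request for each kind of capture. The sketch below is an assumption-laden illustration in plain Java: DistortionModePolicy and chooseMode are hypothetical names, and the three integer constants are local stand-ins assumed to mirror CameraMetadata.DISTORTION_CORRECTION_MODE_OFF, _FAST, and _HIGH_QUALITY. An app should reference the framework constants directly.

```java
public class DistortionModePolicy {
    // Local stand-ins assumed to mirror CameraMetadata.DISTORTION_CORRECTION_MODE_*;
    // a real app should reference the framework constants instead.
    static final int MODE_OFF = 0;
    static final int MODE_FAST = 1;
    static final int MODE_HIGH_QUALITY = 2;

    static boolean contains(int[] modes, int mode) {
        if (modes == null) return false;
        for (int m : modes) {
            if (m == mode) return true;
        }
        return false;
    }

    /**
     * Returns the mode to set on the request, or null to leave the template
     * default untouched (for example when the device does not list the key).
     */
    static Integer chooseMode(int[] availableModes, boolean stillCapture) {
        if (stillCapture) {
            // Stills can afford the slower, higher-quality correction
            if (contains(availableModes, MODE_HIGH_QUALITY)) return MODE_HIGH_QUALITY;
            if (contains(availableModes, MODE_FAST)) return MODE_FAST;
            return null;
        }
        // Repeating preview requests: skip correction to protect the frame rate
        return contains(availableModes, MODE_OFF) ? MODE_OFF : null;
    }

    public static void main(String[] args) {
        int[] modes = {MODE_OFF, MODE_FAST, MODE_HIGH_QUALITY}; // hypothetical device
        System.out.println(chooseMode(modes, true));  // prints "2"
        System.out.println(chooseMode(modes, false)); // prints "0"
        System.out.println(chooseMode(null, true));   // prints "null"
    }
}
```

The returned value would then be passed to the request builder under CaptureRequest.DISTORTION_CORRECTION_MODE, and skipped entirely when the function returns null.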